Artificial intelligence has rapidly evolved from a theoretical concept to a tangible and transformative force that is reshaping industries, governments, and societies. Amid this technological revolution, Steve Bannon’s recent comments at the Semafor World Economy Summit highlight an essential and deeply complex aspect of AI’s proliferation—the potential influence of artificial intelligence on defense systems and weapons manufacturing. His remarks do not exist in isolation; rather, they echo a wider global anxiety about how innovation, automation, and computational decision-making may intersect with matters of national security, ethics, and strategic stability.

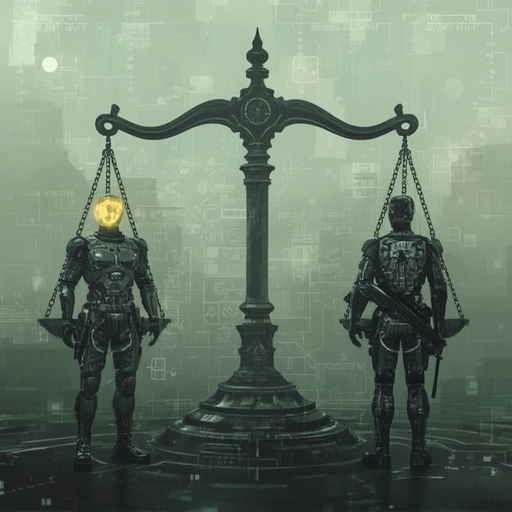

Bannon’s insistence on the need for oversight in this sector underscores an urgent reality: as AI capabilities advance, the boundaries between civilian innovation and military application become increasingly indistinct. Technologies originally designed to optimize data analysis, simulate human cognition, or streamline operations can, without careful supervision, be redirected to design autonomous weapons, enhance surveillance, or refine systems capable of lethal precision. This dual-use potential of AI introduces a profound dilemma—one that is simultaneously scientific, ethical, and political.

The central question posed by Bannon, and by policymakers across the world, is whether humanity can develop reliable frameworks to monitor and regulate AI’s integration into defense technologies before such systems outpace human moral judgment. Oversight mechanisms are notoriously slow to adapt, while the underlying technology advances far faster than rules can be written. This mismatch creates an urgent need for transparent governance structures, global protocols, and ethical standards that transcend national competition.

Beyond immediate issues of control and compliance, the conversation extends to deeper philosophical implications. What does accountability mean when algorithms, rather than individuals, make decisions that may result in harm? How can responsibility be established when complex neural networks reach their outputs in ways that even their creators cannot fully explain? These are not hypothetical scenarios but pressing challenges that already trouble technologists and ethicists alike.

Bannon’s call for reflection thus resonates as an appeal to harmonize progress with prudence. It challenges both industry leaders and public institutions to think beyond efficiency or profit and consider the enduring human consequences of technological acceleration. True advancement, in this sense, involves not only the creation of powerful systems but the cultivation of wisdom sufficient to wield them responsibly.

In essence, this discussion transcends the immediate discourse on AI and defense—it represents a test of humanity’s ethical maturity. Can innovation coexist with restraint? Can ingenuity be guided by conscience? The answers to these questions will determine whether artificial intelligence becomes a tool for safeguarding our collective security or a catalyst for unprecedented risk in the century ahead. #AIethics #DefenseTech #ResponsibleAI

Source: https://www.businessinsider.com/steve-bannon-backs-anthropics-pentagon-deal-rejection-2026-4