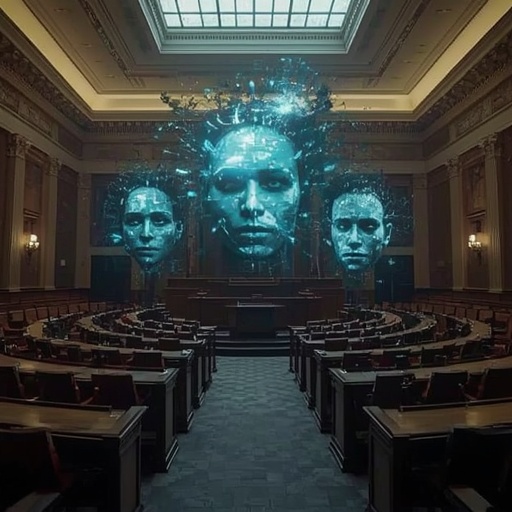

The convergence of scandal and artificial intelligence has ushered in an era in which the authenticity of what we see and hear can no longer be assumed. Deepfakes, produced by neural networks capable of reconstructing faces, voices, and entire scenes, show that digital deception is no longer the domain of science fiction but a pressing global reality. Visual proof once carried unquestioned authority; synthetic media now challenges the foundation on which trust and accountability are built.

Legal battles are already revealing the disruptive potential of this technology. A single manipulated video or audio clip can shift public opinion, mislead investigators, or erode the credibility of legitimate evidence. Courts that rely on recordings as factual evidence must now confront a chilling possibility: that what appears genuine might in fact be digitally fabricated with a precision undetectable to the untrained eye. This shift carries profound ethical implications not only for legal systems but also for journalists, corporate leaders, and citizens navigating a media environment saturated with content that can be altered at the click of a button.

The question, therefore, extends far beyond technological curiosity: it strikes at the heart of societal integrity. How can we maintain confidence in justice, journalism, and collective memory when artificial intelligence can so easily rewrite what is seen and heard? Only through heightened awareness, education in digital literacy, and rigorous standards for verification can we hope to mitigate the corrosive effects of misinformation. As AI continues to evolve, so too must our vigilance, so that in the pursuit of innovation we do not sacrifice truth itself.

Source: https://www.wsj.com/tech/jpmorgan-sexual-assault-lawsuit-ai-deepfake-rana-hajdini-cb373d09?mod=rss_Technology